
Simulations in Modern Experiments


In the early days of physics, such as in the study of electric charge, desktop equipment, trial-and-error experiments, and analytical tools sufficed.

Today, however, both the physics and the tools used to study it can be extremely complex.

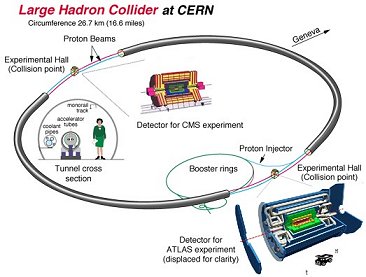

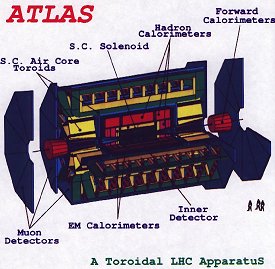

High energy particle experiments, for example, are typically carried out at accelerators that are many kilometers in scale.

The detector systems can be as big as battleships and involve enormous numbers of complex components and sub-components:

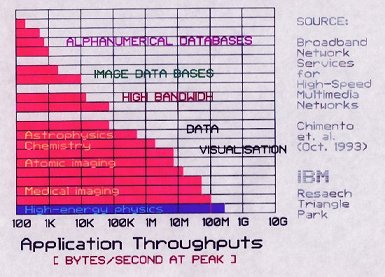

Raw events occur at megahertz rates. Hardware and software filters reduce these to perhaps tens or hundreds of events per second. But even then, the data rates can reach hundreds of megabytes per second.
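The arithmetic behind these rates can be sketched in a few lines of Java. This is only an illustration: the class name `TriggerRates`, its methods, and every number in `main` (a 1 MHz raw rate, 100 recorded events per second, 2 MB per event) are assumptions chosen to fall inside the ranges quoted above, not values from any particular experiment.

```java
// Back-of-the-envelope rate arithmetic for a filter chain.
// All names and numbers here are illustrative assumptions, not measurements.
final class TriggerRates {

    /** Factor by which the filters must reduce the raw event rate. */
    static double rejectionFactor(double rawRateHz, double recordedRateHz) {
        return rawRateHz / recordedRateHz;
    }

    /** Data rate in megabytes per second for a given event rate and event size. */
    static double dataRateMBps(double recordedRateHz, double eventSizeMB) {
        return recordedRateHz * eventSizeMB;
    }

    public static void main(String[] args) {
        double rawRateHz = 1.0e6;      // assumed: 1 MHz raw event rate
        double recordedRateHz = 100.0; // assumed: 100 events/s survive the filters
        double eventSizeMB = 2.0;      // assumed: 2 MB per recorded event

        System.out.println("Rejection factor: "
                + rejectionFactor(rawRateHz, recordedRateHz));
        System.out.println("Data rate (MB/s): "
                + dataRateMBps(recordedRateHz, eventSizeMB));
    }
}
```

With these assumed inputs the filters must discard all but one event in ten thousand, which is why their behavior has to be understood so carefully.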

The goal of such experiments is usually to search for the production of new particles as the collision energy goes to higher and higher levels. These particles are typically produced at very low rates as the thresholds for their production are crossed.

So in millions of events recorded, perhaps only a handful will show evidence of the particle of interest.

Simulations are essential to analyzing the data to find the events with the good stuff!

The simulations provide the corrections to the data that remove the inefficiencies and distortions produced by the detectors.

For example, one must ensure that the filters (or triggers as they are called), employed to reduce the event rates to a manageable level, do not throw out the rare events that are of greatest interest.

Correctly simulating the effects of the cuts that the online and offline filters make to the data to eliminate backgrounds has become a crucial factor in ensuring the reliability of the resulting measurements.
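As a rough illustration of how a simulation yields such a correction, the toy Monte Carlo below estimates the fraction of signal events that survive a single threshold cut and then uses that fraction to correct an observed count. Everything in it is an assumption made for the sketch: the class `TriggerEfficiencySketch`, the exponential energy spectrum, the Gaussian detector smearing, and all numerical values. A real experiment would use a far more detailed detector simulation.

```java
import java.util.Random;

// Toy Monte Carlo: estimate the efficiency of a threshold cut, then use it
// to correct an observed event count. Hypothetical model, for illustration only.
final class TriggerEfficiencySketch {

    /** Fraction of simulated events whose smeared energy passes the threshold. */
    static double simulateEfficiency(int nEvents, double threshold,
                                     double meanEnergy, double resolution,
                                     long seed) {
        Random rng = new Random(seed);
        int passed = 0;
        for (int i = 0; i < nEvents; i++) {
            // Assumed physics: true energy follows an exponential spectrum.
            double trueEnergy = -meanEnergy * Math.log(1.0 - rng.nextDouble());
            // Assumed detector response: Gaussian smearing of the true energy.
            double measured = trueEnergy + resolution * rng.nextGaussian();
            if (measured > threshold) {
                passed++;
            }
        }
        return (double) passed / nEvents;
    }

    /** Estimate of the number of events produced, given those observed. */
    static double correctedCount(long observed, double efficiency) {
        if (efficiency <= 0.0) {
            throw new IllegalArgumentException("efficiency must be positive");
        }
        return observed / efficiency;
    }

    public static void main(String[] args) {
        // Assumed inputs: 100000 simulated events, threshold 10, mean energy 20,
        // resolution 2 (arbitrary units), fixed seed for reproducibility.
        double eff = simulateEfficiency(100_000, 10.0, 20.0, 2.0, 42L);
        System.out.println("Simulated cut efficiency: " + eff);
        System.out.println("Corrected count for 12 observed events: "
                + correctedCount(12, eff));
    }
}
```

The point of the sketch is the division in `correctedCount`: if the simulated efficiency is wrong, every measurement derived from the surviving events inherits that error.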

Latest update: Dec.10.2003
